Project 002 // Computer Vision + Haptics // Provisional Patent

RIDGE

Remote Identification and Detection of Genital Skin Cancer — a multi-modal diagnostic system combining CNN-based visual classification with a custom non-Newtonian haptic sensor array to replicate the tactile and visual information of a clinical skin lesion examination, remotely.

Microsoft Imagine Cup Winner

Regeneron ISEF 4th Place Grand Award

Provisional Patent

Validated with Dermatologists

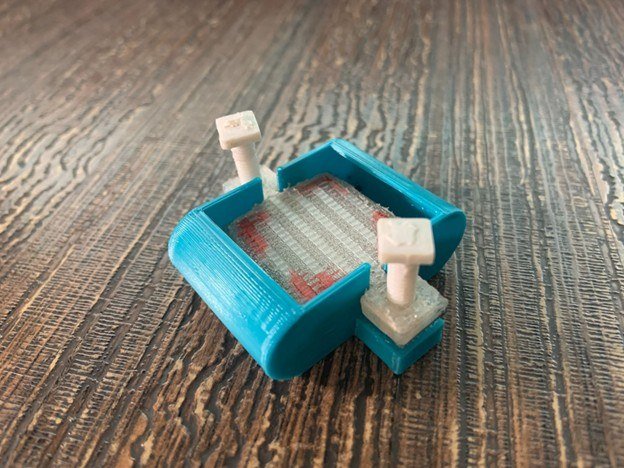

RIDGE sensor array — front face with pressure contact layer

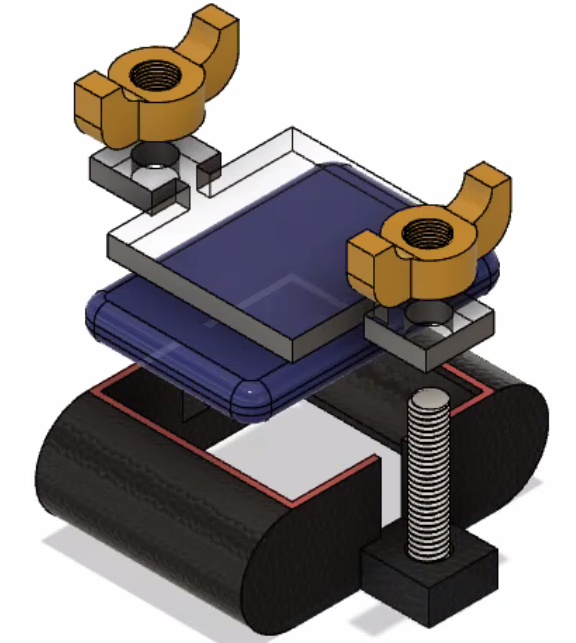

Back face — non-Newtonian pressure medium and PCB mount

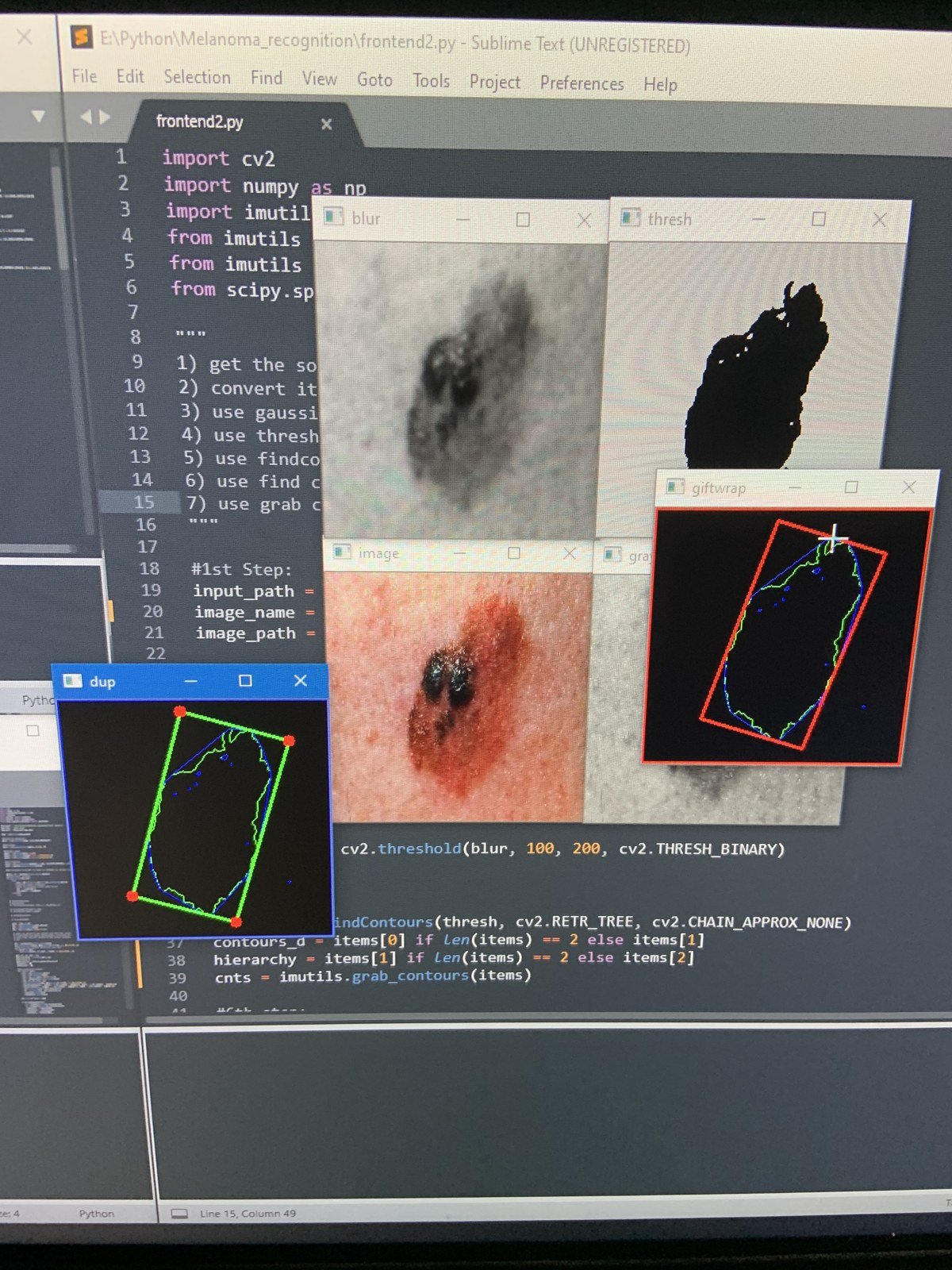

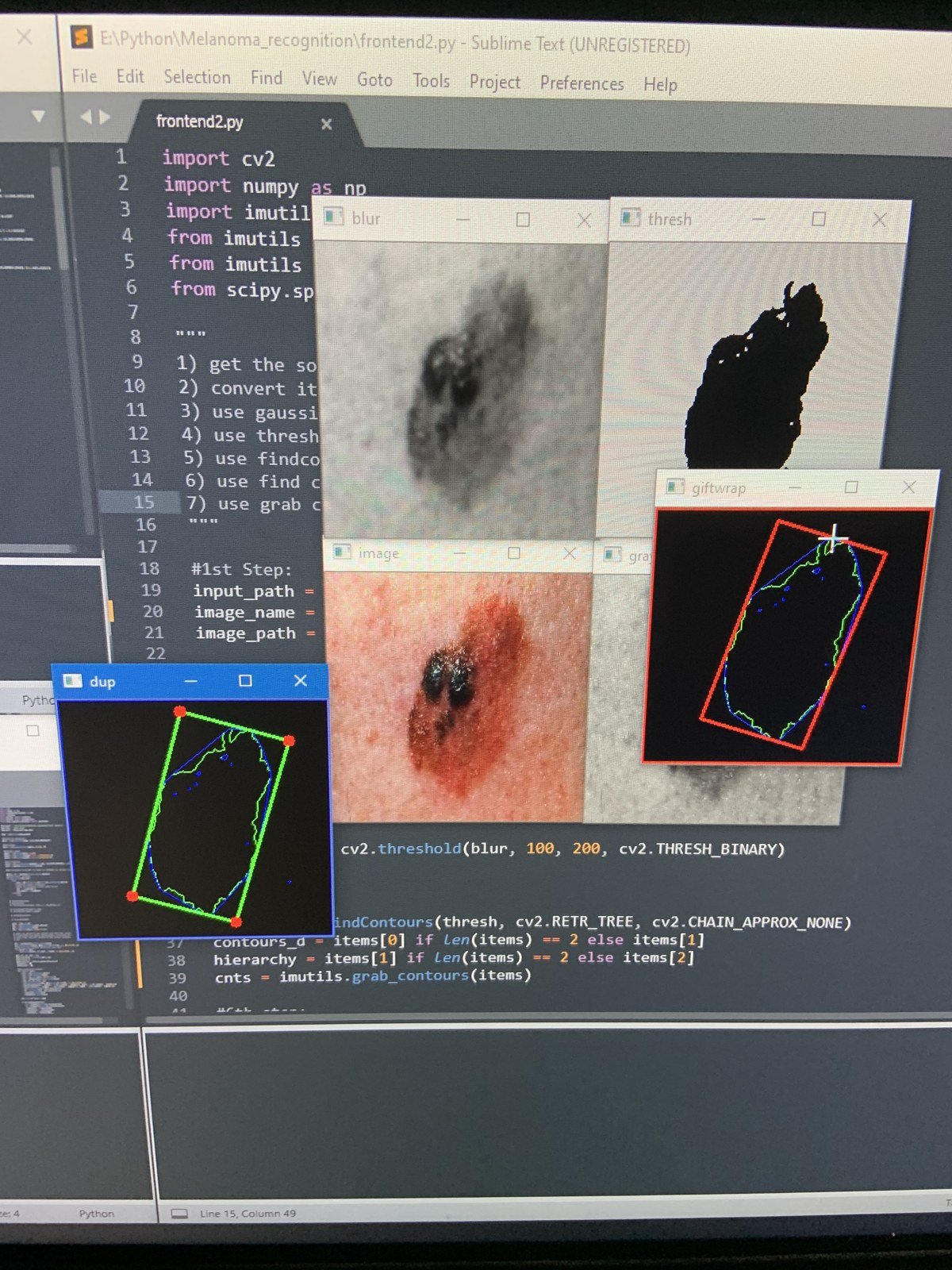

CV2 contour detection running — lesion boundary segmentation with bounding box

// 01 — Problem Statement

The Diagnostic Channel COVID-19 Broke

Skin cancer is the most common malignancy globally, with melanoma responsible for the majority of skin cancer deaths despite representing only ~1% of cases. The critical factor in survival is early detection — 5-year survival for localized melanoma exceeds 98%; for metastatic disease it drops below 30%. Routine screening appointments are therefore high-stakes interventions, not optional wellness visits.

Dermatological diagnosis depends on two parallel information channels: visual appearance (color, border irregularity, asymmetry, diameter; the clinical ABCDE criteria) and tactile feedback (lesion elevation, firmness, texture variability, raised margins). An experienced dermatologist palpates suspicious lesions because the mechanical properties of a melanoma feel meaningfully different from those of a benign nevus, particularly at the margins.

COVID-19 brought most in-person dermatology appointments to a halt in 2020. Telehealth platforms offered visual consultation via photograph, but photographs are fundamentally incapable of transmitting tactile information. The haptic diagnostic channel was severed entirely. Dermatologists were making triage decisions with half the clinical data they normally use, and patients with suspicious lesions had no viable alternative.

The Core Hypothesis

If we can capture the mechanical signature of a skin lesion (its topographic pressure distribution, firmness profile, and edge compliance) and transmit it alongside visual data, we can give remote dermatologists an information set clinically equivalent to that of an in-person exam.

Diagnostic Information Channels

| Channel | Telehealth | Notes |
|---|---|---|
| Visual (ABCDE criteria) | ✓ | Photos capture color, border, asymmetry, diameter |
| Tactile (palpation) | ✗ | Elevation, firmness, texture: lost completely |
| RIDGE: both channels | Restored ✓ | 4D tactile-visual profiling transmitted remotely |

// 02 — System Design

Hardware: The Haptic Sensor Array

The core hardware innovation in RIDGE is a custom 40×40 mm pressure sensor array designed to capture the continuous topographic pressure profile of a skin lesion when the array is pressed against the skin surface. The critical material choice, a non-Newtonian pressure-sensitive medium, lets the array capture a continuous spatial pressure distribution rather than isolated point measurements.

Component Breakdown

01

Compression Plate + Wing Nuts

Adjustable clamping force — controls applied pressure range. Wing nuts allow single-hand operation for patient self-use.

02

Acrylic Distribution Plate

Rigid diffuser that spreads the applied force laterally through the sensing medium, so load reaches the sensors through the medium rather than through direct mechanical contact with discrete sensor points.

03

Non-Newtonian Sensing Medium

Pressure-sensitive gel that deforms continuously under load, enabling capture of spatial pressure gradients. It stiffens under sudden impact but flows under sustained pressure, conforming to the lesion's topography.

04

FSR Sensor Array + PCB

Grid of force-sensitive resistors (FSRs) mounted in a 3D-printed TPU frame. Reads the pressure distribution transmitted through the sensing medium.

05

Cylindrical Mounting Rails

Keeps sensor array perpendicular to skin surface — eliminates angular measurement artifacts from inconsistent application angle.
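The component stack ends in an FSR grid read through an ADC. The sketch below shows one conventional way to turn raw ADC counts into a relative pressure map. It assumes, hypothetically, that each FSR is the upper leg of a voltage divider with a fixed 10 kΩ resistor to ground, read by a 10-bit ADC at 3.3 V, and it treats FSR conductance as roughly proportional to force, a common first-order approximation. None of these values come from the RIDGE PCB.

```python
# Hypothetical FSR readout sketch; all circuit values are assumptions.
V_REF = 3.3         # volts: assumed divider supply and ADC reference
ADC_MAX = 1023      # assumed 10-bit ADC full scale
R_FIXED = 10_000.0  # ohms: assumed fixed resistor to ground


def counts_to_conductance(counts: int) -> float:
    """Convert one ADC reading to FSR conductance in siemens (0.0 = no load)."""
    # Clamp so a saturated reading does not divide by zero below.
    counts = min(max(counts, 0), ADC_MAX - 1)
    if counts == 0:
        return 0.0  # no current through the divider: FSR effectively open
    v_out = V_REF * counts / ADC_MAX
    # Voltage divider: v_out = V_REF * R_FIXED / (R_FIXED + R_fsr)
    r_fsr = R_FIXED * (V_REF - v_out) / v_out
    return 1.0 / r_fsr


def pressure_map(raw: list[list[int]]) -> list[list[float]]:
    """Normalize a grid of ADC counts to a 0..1 relative pressure map."""
    g = [[counts_to_conductance(c) for c in row] for row in raw]
    peak = max(max(row) for row in g)
    if peak == 0.0:
        return [[0.0] * len(row) for row in g]
    return [[v / peak for v in row] for row in g]


# Example: a 3x3 patch with the strongest contact in the centre.
patch = [[100, 300, 100], [300, 900, 300], [100, 300, 100]]
print(pressure_map(patch)[1][1])  # 1.0
```

Normalizing to the peak gives a shape-only map; absolute force would need a per-sensor calibration curve, which this sketch does not attempt.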

Visual System

- Custom CNN architecture (VGG-inspired)

- 10,000+ curated clinical dermatology images

- 8-class lesion classification output

- ABCDE feature extraction preprocessing

- OpenCV contour detection + segmentation

- 95% classification accuracy (held-out test set)

- Confidence calibration via temperature scaling
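The ABCDE feature extraction step is not described in detail. As an illustration of the "A" (asymmetry) criterion only, the sketch below scores a binary lesion mask by mirroring it through its centroid, left-right and top-bottom, and counting lesion pixels that have no lesion pixel at the mirrored position. The function name and the centroid-axis choice are assumptions; aligning to the lesion's principal axes first is a common refinement omitted here.

```python
def asymmetry_score(mask: list[list[int]]) -> float:
    """Hypothetical ABCDE 'A' proxy for a binary lesion mask (1 = lesion).

    Mirrors the lesion through its centroid (left-right, then top-bottom) and
    returns the fraction of lesion pixels whose mirror image is not lesion,
    averaged over both flips. 0.0 = symmetric about both centroid axes.
    """
    pts = {(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v}
    if not pts:
        raise ValueError("mask contains no lesion pixels")
    cy = sum(r for r, _ in pts) / len(pts)  # centroid row
    cx = sum(c for _, c in pts) / len(pts)  # centroid column
    lr = sum((r, round(2 * cx - c)) not in pts for r, c in pts)
    tb = sum((round(2 * cy - r), c) not in pts for r, c in pts)
    return (lr + tb) / (2 * len(pts))


square = [[0, 0, 0, 0, 0]] + [[0, 1, 1, 1, 0] for _ in range(3)] + [[0, 0, 0, 0, 0]]
ell = [[1, 0, 0], [1, 0, 0], [1, 1, 1]]
print(asymmetry_score(square), asymmetry_score(ell))  # 0.0 0.6
```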

Haptic System

- 40×40mm non-Newtonian pressure array

- Custom PCB — FSR grid + ADC readout

- Spatial pressure map reconstruction

- Lesion elevation + firmness quantification

- Edge compliance profile (margin stiffness)

- Temporal deformation curve (load vs. time)

- Validated with radiologists for consistency
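The haptic feature list can be made concrete with a small sketch. Every definition here is a hypothetical proxy, not RIDGE's published feature set: contact area is the number of cells above a threshold, "margin gradient" averages the pressure-gradient magnitude over contact cells that border non-contact cells (a stand-in for edge compliance), and "relaxation" is the fractional drop from peak to final total load on a load-vs-time curve.

```python
import math


def haptic_features(p, load_curve, contact_thresh=0.2):
    """Hypothetical haptic features from a normalized pressure map `p`
    (list of rows, values 0..1) and a total-load time series `load_curve`."""
    rows, cols = len(p), len(p[0])
    contact = [[v >= contact_thresh for v in row] for row in p]
    margin_grads = []
    for r in range(1, rows - 1):          # interior cells only, so central
        for c in range(1, cols - 1):      # differences stay in bounds
            neighbours = (contact[r + 1][c], contact[r - 1][c],
                          contact[r][c + 1], contact[r][c - 1])
            if contact[r][c] and not all(neighbours):  # contact-region margin
                gx = (p[r][c + 1] - p[r][c - 1]) / 2.0
                gy = (p[r + 1][c] - p[r - 1][c]) / 2.0
                margin_grads.append(math.hypot(gx, gy))
    peak_load = max(load_curve)
    return {
        "peak_pressure": max(max(row) for row in p),
        "contact_cells": sum(sum(row) for row in contact),
        "margin_gradient": (sum(margin_grads) / len(margin_grads)
                            if margin_grads else 0.0),
        "relaxation": ((peak_load - load_curve[-1]) / peak_load
                       if peak_load > 0 else 0.0),
    }


# 5x5 map with a firm 3x3 lesion in the centre; load rises, then relaxes.
lesion = [[1.0 if 1 <= r <= 3 and 1 <= c <= 3 else 0.0 for c in range(5)]
          for r in range(5)]
print(haptic_features(lesion, [0.0, 5.0, 10.0, 8.0]))
```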

// 03 — Visual Classification Pipeline

From Photograph to Diagnosis

The visual classification pipeline processes a photograph of the lesion through a sequence of preprocessing, feature extraction, and classification stages. Each stage was designed to handle the specific degradation modes of clinical photography (inconsistent lighting, varying resolution, skin tone variation) rather than the controlled conditions of benchmark datasets.

Input

Raw lesion photograph

Variable resolution, lighting, skin tone

→

Preprocessing

Segmentation + normalization

CV2 contour detection, color normalization, CLAHE

→

Feature Extract

CNN backbone

VGG-inspired, ImageNet pretrained, fine-tuned on clinical data

→

Classification

8-class softmax

Melanoma, BCC, SCC, nevus + 4 other benign categories

→

Output

Diagnosis + confidence

Probability distribution + ABCDE feature report

OpenCV contour detection running — lesion segmentation (green), bounding box (red), and convex hull (blue) overlaid on raw clinical image. Code visible: CV2 threshold → findContours → grab contour pipeline.
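The caption's threshold → findContours → largest-contour pipeline can be mimicked without OpenCV to show what each step does. This pure-Python sketch uses Otsu's method for the threshold (an assumption; the caption does not name the thresholding mode), keeps the largest 4-connected dark region as the lesion, and returns an (x, y, w, h) box in the same order as `cv2.boundingRect`. It also assumes the lesion is darker than the surrounding skin.

```python
from collections import deque


def otsu_threshold(gray):
    """Otsu's method on 8-bit grayscale (list of rows): the t maximizing
    between-class variance, where class 0 is pixels <= t."""
    hist = [0] * 256
    for row in gray:
        for v in row:
            hist[v] += 1
    total = sum(hist)
    sum_all = sum(i * h for i, h in enumerate(hist))
    best_t, best_var, w_b, sum_b = 0, -1.0, 0, 0
    for t in range(256):
        w_b += hist[t]
        sum_b += t * hist[t]
        w_f = total - w_b
        if w_b == 0:
            continue
        if w_f == 0:
            break
        m_b, m_f = sum_b / w_b, (sum_all - sum_b) / w_f
        var = w_b * w_f * (m_b - m_f) ** 2
        if var > best_var:
            best_t, best_var = t, var
    return best_t


def largest_region(mask):
    """Largest 4-connected True region, as a list of (row, col) pixels."""
    rows, cols, seen, best = len(mask), len(mask[0]), set(), []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or (r, c) in seen:
                continue
            region, queue = [], deque([(r, c)])
            seen.add((r, c))
            while queue:
                y, x = queue.popleft()
                region.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and mask[ny][nx] and (ny, nx) not in seen:
                        seen.add((ny, nx))
                        queue.append((ny, nx))
            if len(region) > len(best):
                best = region
    return best


def lesion_bbox(gray):
    """(x, y, w, h) of the largest dark region, or None if nothing is found."""
    t = otsu_threshold(gray)
    mask = [[v <= t for v in row] for row in gray]  # lesion assumed darker
    region = largest_region(mask)
    if not region:
        return None
    ys = [y for y, _ in region]
    xs = [x for _, x in region]
    return min(xs), min(ys), max(xs) - min(xs) + 1, max(ys) - min(ys) + 1


img = [[200] * 8 for _ in range(8)]
for r in range(2, 5):
    for c in range(3, 6):
        img[r][c] = 50          # 3x3 lesion
img[7][0] = 50                  # isolated dark speck (noise)
print(lesion_bbox(img))         # (3, 2, 3, 3)
```

Keeping only the largest region is what discards the isolated speck; the bounding box, convex hull, and ABCDE measurements would then be computed on that region alone.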

Dataset Construction

Public dermatology datasets have two critical weaknesses for clinical use: severe class imbalance (benign cases outnumber malignant by 20:1 or more) and inconsistent labeling standards across source institutions. Models trained on uncurated public data score well on benchmark tests but fail on clinical populations. RIDGE used a manually curated dataset with dermatologist-reviewed labels.

Class Distribution — Curated Dataset

Benign Nevus + other benign

~3,900 images

Curation Methodology

01

Source aggregation: ISIC Archive, HAM10000, and DermNet — reviewed for labeling consistency before inclusion.

02

Dermatologist review: Ambiguous labels flagged and reclassified by a practicing dermatologist. ~12% of initially included images were rejected or reclassified.

03

Targeted augmentation: Rotation, color jitter, and zoom applied exclusively to the underrepresented malignant classes. Benign classes were not augmented, since adding synthetic copies of the majority classes would only widen the imbalance.

04

Skin tone stratification: Individual Typology Angle (ITA) distribution checked for skin-tone imbalance across the dataset. Dataset spans Fitzpatrick skin types I–VI.
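For the skin tone check, ITA is computed from CIELAB values as ITA° = arctan((L* − 50) / b*) × 180/π. The sketch below computes it per image and tallies the conventional ITA skin-color categories (very light > 55°, light 41–55°, intermediate 28–41°, tan 10–28°, brown −30–10°, dark ≤ −30°). It assumes each image has already been reduced to a mean (L*, b*) of healthy skin; how those pixels are selected is out of scope, and ITA categories do not map one-to-one onto Fitzpatrick types.

```python
import math
from collections import Counter

# Conventional ITA categories: (exclusive lower bound in degrees, name).
ITA_CATEGORIES = [(55, "very light"), (41, "light"), (28, "intermediate"),
                  (10, "tan"), (-30, "brown")]


def ita_degrees(L: float, b: float) -> float:
    """ITA = arctan((L* - 50) / b*) in degrees. atan2 matches the arctan of
    the ratio whenever b* > 0 (typical for skin) and avoids dividing by zero."""
    return math.degrees(math.atan2(L - 50.0, b))


def ita_category(ita: float) -> str:
    for lower, name in ITA_CATEGORIES:
        if ita > lower:
            return name
    return "dark"  # ITA <= -30 degrees


def ita_distribution(skin_lab):
    """Count images per ITA category from per-image mean (L*, b*) of skin."""
    return Counter(ita_category(ita_degrees(L, b)) for L, b in skin_lab)


print(ita_distribution([(70.0, 10.0), (50.0, 10.0), (30.0, 10.0)]))
```

A strongly skewed tally (for example, almost no images in the brown or dark bins) is the signal to source more images before training.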

// 04 — Multi-Modal Fusion

Combining Visual and Tactile Channels

The central architectural challenge in RIDGE is combining two fundamentally different data representations — spatial image tensors from the visual system and pressure matrix time-series from the haptic array — into a single classification decision. Early fusion (concatenating raw inputs) and mid fusion (combining at an intermediate feature layer) both produced worse results than the final late fusion architecture.

Late Fusion Architecture

Visual Stream

CNN feature extractor

512-dim embedding

Lesion image → features

→

Attention-Weighted Fusion

Learned attention weights

1024-dim combined vector

2-layer MLP classifier

←

Haptic Stream

Pressure feature extractor

512-dim embedding

Pressure map → features

Why Late Fusion Outperforms Early/Mid Fusion

Each modality learns its own optimal feature representation independently, then the fusion layer learns when to weight each modality more heavily. For clear visual cases, the model learns to trust visual features. For visually ambiguous borderline lesions, it learns to weight haptic features more — which is exactly the diagnostic behavior we want to replicate from expert dermatologists.
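The late-fusion design can be sketched in a few lines. The scoring vectors that turn each embedding into an attention logit are an assumed form of the "learned attention weights", and every weight below is a placeholder that training would set. Each embedding is scaled by its modality weight and the two are concatenated (512 + 512 = 1024 dims in RIDGE) before the 2-layer MLP. Tiny dimensions keep the example instant.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attention_fuse(visual, haptic, w_vis, w_hap):
    """Weight each modality embedding by a softmax attention score, then
    concatenate: two d-dim embeddings -> one 2d-dim vector.
    w_vis / w_hap are hypothetical learned scoring vectors."""
    alpha = softmax([dot(w_vis, visual), dot(w_hap, haptic)])
    fused = [alpha[0] * x for x in visual] + [alpha[1] * x for x in haptic]
    return fused, alpha


def mlp_logits(x, W1, b1, W2, b2):
    """2-layer MLP: ReLU hidden layer, then linear class logits."""
    hidden = [max(0.0, dot(row, x) + b) for row, b in zip(W1, b1)]
    return [dot(row, hidden) + b for row, b in zip(W2, b2)]


# Toy run with d = 4 and 8 classes; real weights would come from training.
d, n_hidden, n_classes = 4, 3, 8
visual, haptic = [1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]
fused, alpha = attention_fuse(visual, haptic, w_vis=[2.0, 0, 0, 0], w_hap=[0.0] * d)
W1 = [[0.1] * (2 * d) for _ in range(n_hidden)]
W2 = [[0.1] * n_hidden for _ in range(n_classes)]
logits = mlp_logits(fused, W1, [0.0] * n_hidden, W2, [0.0] * n_classes)
print(len(fused), [round(a, 3) for a in alpha], len(logits))
```

Because the attention weights depend on the inputs, the same network can lean on the visual stream for a clear case and shift weight to the haptic stream when the visual score is weak.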

Key Finding

Haptic features improved accuracy on borderline cases (dysplastic nevus vs. early melanoma) by 4–6% compared to visual-only classification. These are precisely the cases where an in-person dermatologist would palpate the lesion; the system learned the same diagnostic heuristic from data.

// 05 — Technical Challenges

The Hard Problems

C1

Replicating Continuous Skin Texture from Discrete Sensors

+

Problem

Skin lesions have continuous topographic texture variation — a melanoma has raised, irregular margins with variable firmness that grades into normal tissue over millimeters. Discrete FSR point sensors measure pressure at fixed locations and cannot capture this continuous gradient directly. Interpolation between discrete points produces smooth pressure maps that erase exactly the edge irregularity that is diagnostically significant.

Solution

Non-Newtonian pressure-sensitive material as the intermediate sensing layer. When pressed against a lesion, the material deforms to follow the local surface topography, so the continuous skin surface is encoded in the continuous deformation of the material, which the sensor array then reads. The material acts as an analog spatial integrator between the continuous skin surface and the discrete sensor array. This remains the most imperfect element of RIDGE: material consistency between applications and temperature sensitivity are unsolved challenges that I want to address in future work.
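The claim in the C1 problem statement about interpolation can be checked with a toy 1-D example: a sharp lesion margin sampled only at widely spaced sensor sites and linearly interpolated back becomes a ramp whose steepest slope is set by the sensor pitch, not by the tissue. The profile length, edge position, and pitch are illustrative values, not RIDGE measurements.

```python
def step_profile(n, edge):
    """Fine-grid 1-D pressure profile: 1.0 over the lesion (i < edge), 0.0 beyond."""
    return [1.0 if i < edge else 0.0 for i in range(n)]


def sample_and_interpolate(profile, pitch):
    """Read the profile only at sensor sites every `pitch` samples, then
    linearly interpolate back onto the fine grid."""
    sites = list(range(0, len(profile), pitch))
    if sites[-1] != len(profile) - 1:
        sites.append(len(profile) - 1)  # make sure the last sample is covered
    out = []
    for i in range(len(profile)):
        for a, b in zip(sites, sites[1:]):
            if a <= i <= b:
                t = (i - a) / (b - a)
                out.append(profile[a] * (1 - t) + profile[b] * t)
                break
    return out


def max_slope(profile):
    """Steepest change between neighbouring samples (edge sharpness proxy)."""
    return max(abs(y - x) for x, y in zip(profile, profile[1:]))


true_margin = step_profile(41, edge=21)            # sharp lesion margin
reconstructed = sample_and_interpolate(true_margin, pitch=8)
print(max_slope(true_margin), max_slope(reconstructed))  # 1.0 0.125
```

The reconstructed edge is eight times shallower, and any irregularity between sensor sites is lost entirely, which is the motivation for letting a continuous medium do the spatial integration.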

C2

Clinical Dataset Quality vs. Benchmark Performance

+

Problem

Models trained on standard public dermatology datasets (ISIC 2019, HAM10000) achieve high benchmark accuracy but perform poorly in the clinic, because these datasets contain images taken under controlled photographic conditions by trained medical photographers, not under the variable conditions of telehealth photography. A model trained on them learns features that are valid for benchmark images but brittle to real-world photo quality variation.

Solution

Two-stage curation: first, source aggregation with cross-dataset label consistency checking; second, deliberate addition of degraded-condition images (motion blur, variable lighting, low resolution, partial occlusion) to the training set to improve robustness. I also applied Macenko stain normalization (adapted from histopathology) as a color normalization step to reduce skin tone bias. The result was a 3% drop in benchmark accuracy but a measurably more robust model on held-out real-world telehealth images reviewed by the clinical advisor.
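A minimal sketch of the degraded-condition transforms named above, on grayscale images stored as lists of rows with values 0–255. The kernel sizes, gamma range, downsampling factors, and patch size are hypothetical parameters, and Macenko normalization is not shown.

```python
import random


def motion_blur(img, k=5):
    """Horizontal motion blur: mean over a k-pixel window (k odd), edges clamped."""
    h, w = k // 2, len(img[0])
    out = []
    for row in img:
        out.append([round(sum(row[min(max(c + d, 0), w - 1)] for d in range(-h, h + 1))
                          / (2 * h + 1)) for c in range(w)])
    return out


def adjust_gamma(img, g):
    """Simulate under-/over-exposure: g > 1 darkens, g < 1 brightens."""
    return [[round(255 * (v / 255) ** g) for v in row] for row in img]


def low_res(img, f):
    """Drop resolution by factor f (nearest-neighbour down, then back up)."""
    return [[img[(r // f) * f][(c // f) * f] for c in range(len(img[0]))]
            for r in range(len(img))]


def occlude(img, top, left, size, value=0):
    """Cover a size x size square (e.g. a finger or clothing edge)."""
    out = [row[:] for row in img]
    for r in range(top, min(top + size, len(img))):
        for c in range(left, min(left + size, len(img[0]))):
            out[r][c] = value
    return out


def degrade(img, rng):
    """Apply one randomly chosen degradation; choices and ranges are hypothetical."""
    kind = rng.choice(["blur", "light", "res", "occlude"])
    if kind == "blur":
        return motion_blur(img, k=rng.choice([3, 5, 7]))
    if kind == "light":
        return adjust_gamma(img, rng.uniform(0.5, 2.0))
    if kind == "res":
        return low_res(img, rng.choice([2, 4]))
    size = max(1, len(img) // 4)
    return occlude(img, rng.randrange(len(img)), rng.randrange(len(img[0])), size)


print(motion_blur([[0, 0, 90, 0, 0]], k=3))  # [[0, 30, 30, 30, 0]]
```

Every transform preserves image size, so degraded copies can be mixed into the training set without changing the model's input shape.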

C3

Communicating Model Uncertainty to Clinicians

+

Problem

A softmax output of 0.87 for "melanoma" from a neural network does not mean 87% probability of melanoma in any calibrated sense — it means the model's output neuron fired at 0.87, which is only meaningful relative to the training distribution. Presenting this as a probability to a dermatologist making a triage decision is potentially dangerous overconfidence, particularly on out-of-distribution inputs.

Solution

Post-hoc temperature scaling, fit on a validation set, divides the logits by a single learned temperature before the softmax so that output probabilities better match empirical frequencies. Additionally, the output report distinguishes high-confidence classifications (top class probability >0.85, clear visual features) from low-confidence classifications (top probability <0.6, borderline cases), with explicit recommendations: high-confidence benign = routine monitoring; low-confidence, any class = in-person biopsy referral regardless of the visual prediction. The system's most useful function in ambiguous cases is to route to a human expert, not to replace one.
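Temperature scaling learns one scalar T and replaces softmax(z) with softmax(z / T); T > 1 softens overconfident outputs without changing which class ranks first. The sketch fits T by grid search on validation negative log-likelihood (a stand-in for the usual gradient-based fit) and then applies the 0.85 / 0.6 tiers from the text. The source does not say how high-confidence malignant or mid-confidence predictions are routed, so those fall through to a placeholder "dermatologist review" label.

```python
import math


def softmax(logits, T=1.0):
    """Temperature-scaled softmax: softmax(z / T)."""
    scaled = [z / T for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def nll(logit_rows, labels, T):
    """Mean negative log-likelihood of the true labels at temperature T."""
    return -sum(math.log(softmax(z, T)[y]) for z, y in zip(logit_rows, labels)) / len(labels)


def fit_temperature(logit_rows, labels):
    """Grid search over T in [0.5, 5.0]; a stand-in for the usual gradient-based fit."""
    grid = [0.5 + 0.05 * i for i in range(91)]
    return min(grid, key=lambda T: nll(logit_rows, labels, T))


def triage(probs, class_names, benign_classes):
    """Apply the report's confidence tiers to calibrated probabilities."""
    top = max(range(len(probs)), key=probs.__getitem__)
    p = probs[top]
    if p < 0.6:
        return "in-person biopsy referral"      # low confidence, any class
    if p > 0.85 and class_names[top] in benign_classes:
        return "routine monitoring"             # high-confidence benign
    return "dermatologist review"               # placeholder: not specified in source


# Overconfident validation set: logits say ~98% but only 3 of 4 are correct.
val_logits, val_labels = [[4.0, 0.0]] * 4, [0, 0, 0, 1]
T = fit_temperature(val_logits, val_labels)
print(round(T, 2), round(softmax([4.0, 0.0], T)[0], 2))
```

In this toy case the fitted T is well above 1, pulling the reported confidence from about 0.98 down toward the 75% accuracy actually observed.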

// 06 — Results

System Performance

95%

CNN Accuracy

10K+

Dataset Images

8

Lesion Classes

+5%

Haptic Uplift (borderline)

4th

ISEF Grand Award

1st

Imagine Cup

Validation Note

Accuracy validated on a held-out test set of 1,200 images not seen during training. Clinical review by 2 dermatologists and 1 radiologist confirmed that the system's borderline case handling — routing ambiguous predictions to in-person referral — represented a clinically sound triage behavior. The false negative rate on malignant lesions in the high-confidence classification tier was 2.1%.
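The 2.1% figure is a tier-restricted false negative rate. A sketch of how such a number can be computed, assuming each test record stores the true label, the predicted label, and the calibrated top-class probability, and counting a malignant lesion as a false negative when its prediction is any non-malignant class. The malignant set uses the three malignant classes named in the pipeline; the records are made-up illustrations.

```python
def tier_false_negative_rate(records, malignant, threshold=0.85):
    """False negative rate among truly malignant lesions whose top-class
    probability exceeds `threshold` (the high-confidence tier).

    records: iterable of (true_label, predicted_label, top_probability).
    A prediction counts as negative when it is any non-malignant class."""
    fn = tp = 0
    for true_label, predicted, p in records:
        if p <= threshold or true_label not in malignant:
            continue                      # outside the tier, or not a positive case
        if predicted in malignant:
            tp += 1
        else:
            fn += 1
    return fn / (fn + tp) if fn + tp else float("nan")


MALIGNANT = {"melanoma", "BCC", "SCC"}
test_records = [
    ("melanoma", "melanoma", 0.93), ("BCC", "BCC", 0.90), ("SCC", "SCC", 0.88),
    ("melanoma", "nevus", 0.91),      # high-confidence miss -> false negative
    ("melanoma", "nevus", 0.55),      # low confidence -> referred, not in this tier
    ("nevus", "nevus", 0.97),         # benign -> not a positive case
]
print(tier_false_negative_rate(test_records, MALIGNANT))  # 0.25
```

Restricting the rate to the high-confidence tier matters because that is the tier whose benign predictions skip in-person referral, so its misses are the ones that reach patients unchecked.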

"Visual classification was a tractable ML problem once I had good data. Replicating the physical texture of a skin lesion — that required physics, materials science, and signal processing simultaneously, and I still don't think I fully solved it."

// 07 — Key Learnings

What RIDGE Taught Me

01

Visual data is easy; haptic data is hard. The CNN classification problem was technically tractable with sufficient data and a good preprocessing pipeline. Building a sensor that captures meaningful tactile information from a biological surface is a fundamentally harder problem — it sits at the intersection of materials science, sensor physics, and signal processing in a way that doesn't have clean solutions yet.

02

Benchmark accuracy is not clinical accuracy. A model can score 95% on a test set drawn from the same distribution as training data and fail badly on real clinical images. The gap between benchmark and clinical performance is the most important number in medical ML, and it's almost never the one that gets reported.

03

A diagnostic tool's most valuable function can be routing, not prediction. The cases where RIDGE adds the most clinical value are not the clear melanomas (a dermatologist wouldn't miss those) but the ambiguous borderline lesions where the system's uncertainty quantification routes to in-person biopsy. Knowing when not to commit is a clinical skill; it should be a model skill too.

04

Multi-modal fusion architecture matters more than individual modality performance. Improving the CNN accuracy from 93% to 95% was harder than building the fusion architecture that combined visual and haptic features effectively. The combination was more powerful than either modality alone, but only with the right fusion strategy. Early fusion was strictly worse than unimodal classification.

05

The open problem is what I want to work on. The unsolved challenge — capturing continuous skin texture mechanically and transmitting it faithfully — is a research gap in haptic feedback for medical applications. This is a direction I want to pursue in graduate work: closing the physical sensing gap between remote and in-person examination.

Interested in Computer Vision or Medical Sensing?

Seeking full-time roles in robotics, computer vision, or ML engineering — preferably medical technology. Graduating May 2026.
